Every GRC vendor now claims AI capabilities. Here's how to cut through the noise and evaluate what actually matters when assessing AI features in governance, risk and compliance software.

Open any GRC vendor's website right now. Count the seconds before you see "AI-powered" or "intelligent automation" or "machine learning-driven insights."

It won't take long.

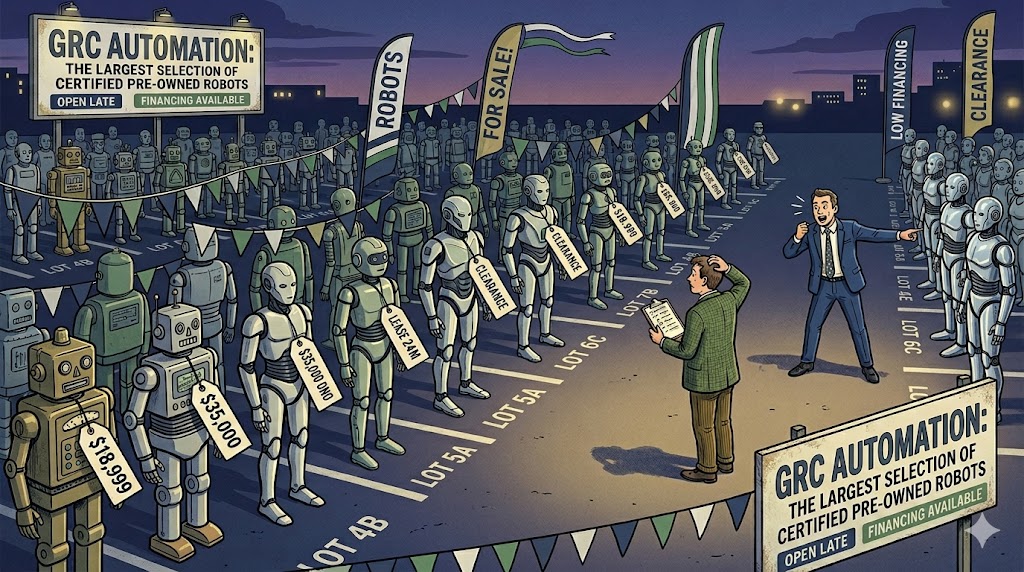

AI has become the universal qualifier in enterprise software marketing. And GRC is no exception. Every vendor, from the enterprise platforms to the startups, is racing to bolt AI features onto their products. Some of it is genuinely useful. A lot of it is noise.

For buyers trying to evaluate GRC software, this creates a real problem. How do you tell the difference between AI that solves your problems and AI that solves the vendor's marketing problem?

Here's a framework for cutting through it.

The first mistake buyers make is evaluating AI features in isolation. "Does it have AI?" is the wrong question. The right question is: "What specific job does this AI help me do better?"

In GRC, the jobs that matter are well-defined. Identifying risks. Mapping controls to regulations. Collecting evidence. Monitoring compliance status. Reporting to the board. Managing third-party risk.

For each of those jobs, AI could theoretically help. But the value varies enormously depending on implementation. A risk identification tool that suggests potential risks based on your industry and regulatory environment? Potentially very useful. An AI chatbot bolted onto the help desk? Not moving the needle on your compliance posture.

When a vendor shows you an AI feature, ask: "Which job does this serve, and how does it perform compared to doing that job manually?"

Not all AI implementations are created equal. In our experience evaluating GRC platforms, AI features tend to fall into three tiers.

This is the most common and often the most valuable. It includes:

What to test: Ask the vendor to show you the accuracy rate. How often does the AI get the mapping right without human correction? What happens when it gets it wrong? Is there a human-in-the-loop validation step?
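If the vendor lets you run a trial, you can measure this yourself rather than rely on a quoted figure. The following is a minimal sketch, assuming you can export each AI-suggested mapping alongside the mapping your reviewer finally approved. The `MappingReview` record, its field names and the `CTRL-`/`REQ-` identifiers are hypothetical and do not come from any particular product.

```python
from dataclasses import dataclass


@dataclass
class MappingReview:
    """One AI-suggested control mapping and the reviewer's final decision."""
    control_id: str
    ai_requirement: str        # requirement the AI suggested (hypothetical ID)
    reviewed_requirement: str  # requirement the human reviewer settled on


def uncorrected_rate(reviews: list[MappingReview]) -> float:
    """Share of AI suggestions the reviewer accepted without changing them."""
    if not reviews:
        raise ValueError("need at least one reviewed mapping")
    accepted = sum(r.ai_requirement == r.reviewed_requirement for r in reviews)
    return accepted / len(reviews)


# Hypothetical sample: four suggestions, one corrected by the reviewer.
sample = [
    MappingReview("CTRL-01", "REQ-A", "REQ-A"),
    MappingReview("CTRL-02", "REQ-B", "REQ-C"),  # reviewer corrected this one
    MappingReview("CTRL-03", "REQ-D", "REQ-D"),
    MappingReview("CTRL-04", "REQ-E", "REQ-E"),
]

print(f"Accepted without correction: {uncorrected_rate(sample):.0%}")
```

A figure like this only means something if the reviewed sample resembles your own control library, so it is worth running on your data rather than the vendor's demo set.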

More sophisticated, and harder to evaluate:

What to test: Ask for examples with real (anonymised) customer data. How far in advance did it flag an issue? What was the false positive rate? Did anyone actually act on the insight?
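Both numbers can be computed from a simple log of what was flagged and what actually happened. This is a rough sketch under stated assumptions: the `Outcome` record and the sample dates are hypothetical, and "false positive rate" is taken in its strict sense, that is, false flags divided by all items where no issue occurred. Some vendors instead quote the share of flags that turned out to be false, so ask which definition they use.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class Outcome:
    """One monitored item over the evaluation period (hypothetical record)."""
    flagged_on: Optional[date]   # when the AI raised a flag, None if it never did
    occurred_on: Optional[date]  # when the issue materialised, None if it never did


def false_positive_rate(outcomes: list[Outcome]) -> float:
    """False flags divided by all items where no issue occurred."""
    fp = sum(o.flagged_on is not None and o.occurred_on is None for o in outcomes)
    tn = sum(o.flagged_on is None and o.occurred_on is None for o in outcomes)
    if fp + tn == 0:
        raise ValueError("no items without an issue, rate is undefined")
    return fp / (fp + tn)


def lead_times_days(outcomes: list[Outcome]) -> list[int]:
    """Days of advance warning for issues that were flagged before they occurred."""
    return [
        (o.occurred_on - o.flagged_on).days
        for o in outcomes
        if o.flagged_on is not None
        and o.occurred_on is not None
        and o.flagged_on <= o.occurred_on
    ]


# Hypothetical sample data.
outcomes = [
    Outcome(date(2024, 1, 10), date(2024, 2, 9)),   # flagged 30 days ahead
    Outcome(date(2024, 1, 15), None),               # false positive
    Outcome(None, None),                            # correctly left alone
    Outcome(None, None),
    Outcome(None, None),
    Outcome(date(2024, 3, 1), date(2024, 3, 11)),   # flagged 10 days ahead
    Outcome(None, date(2024, 4, 1)),                # missed entirely
]

print(f"False positive rate: {false_positive_rate(outcomes):.0%}")
print(f"Median lead time: {median(lead_times_days(outcomes))} days")
```

Neither figure captures the last question, whether anyone acted on the insight. That still needs a conversation with the reference customer.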

The newest and most hyped category:

What to test: Try to break it. Ask ambiguous questions. Ask about edge cases. Ask it to generate a report and then verify every claim against the underlying data. The gap between demo and reality is widest in this tier.
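For the numeric claims in a generated report, the verification step can be partly mechanical. The sketch below assumes you can export the underlying risk records and transcribe the report's headline figures by hand. The record fields, the claim names and the sample values are all hypothetical.

```python
# Hypothetical export of the underlying risk register.
risks = [
    {"id": "R-1", "rating": "high", "status": "open"},
    {"id": "R-2", "rating": "high", "status": "closed"},
    {"id": "R-3", "rating": "medium", "status": "open"},
    {"id": "R-4", "rating": "high", "status": "open"},
    {"id": "R-5", "rating": "low", "status": "open"},
]

# Figures stated in the AI-generated report, transcribed by hand.
claims = {"open_high_risks": 3, "total_risks": 5}


def recompute(records: list[dict]) -> dict[str, int]:
    """Recalculate the headline figures directly from the exported records."""
    return {
        "open_high_risks": sum(
            r["rating"] == "high" and r["status"] == "open" for r in records
        ),
        "total_risks": len(records),
    }


def mismatches(claimed: dict[str, int], actual: dict[str, int]) -> dict[str, tuple]:
    """Return each claim that disagrees with the data as (claimed, actual)."""
    return {
        name: (value, actual.get(name))
        for name, value in claimed.items()
        if actual.get(name) != value
    }


for name, (claimed, actual) in mismatches(claims, recompute(risks)).items():
    print(f"{name}: report says {claimed}, data says {actual}")
```

This only covers claims that reduce to a count or a sum. Narrative statements in the report still need a human to read them against the source records.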

Watch out for these when evaluating:

1. "AI-powered" with no specifics. If the vendor can't explain exactly what the AI does, what model it uses, and where human oversight sits, it's marketing, not a feature.

2. No accuracy metrics. Any AI feature that makes recommendations or classifications should come with measurable accuracy data. If the vendor can't share this, they either haven't measured it or don't like the numbers.

3. Your data training their models. Ask explicitly: does customer data get used to train or fine-tune the AI models? What are the data residency implications? In a compliance tool, this matters more than in most categories.

4. AI that replaces human judgement on material decisions. Risk assessment, control effectiveness, compliance status: these are judgement calls with real consequences. AI should inform these decisions, not make them autonomously. If a vendor pitches full automation of material risk decisions, be sceptical.

5. Features that only work at scale. Some AI capabilities need large datasets to be useful. If you're a mid-market company with 200 risks and 50 vendors, will the AI features actually add value, or are they designed for enterprises with 10,000 data points?

Strip away the marketing and the evaluation becomes straightforward. For each AI feature, ask:

The best AI features in GRC are the ones you barely notice. They quietly reduce manual work, surface things you would have missed, and keep humans in the loop for the decisions that matter.

The worst ones are the ones that sound impressive in a demo and create more work when you actually try to use them.

This is one of the areas where independent testing matters most. Vendors will always present their AI features in the best light. They'll choose the demo scenario that works perfectly. They'll quote accuracy numbers from controlled environments.

At Applied Verdict, we test AI features the way a buyer would actually use them. With real scenarios, edge cases, and the kind of messy data that exists in actual organisations. Because the question isn't "does this AI feature exist?" It's "does this AI feature do the job?"

That's a much harder question to answer from a demo.

Applied Verdict provides independent, structured assessments of GRC software. We evaluate AI features against the Jobs to be Done that matter to buyers, not vendor marketing claims. See how we test.